November’s over and we’re making great headway towards finalizing the new Panda3D version. We hope that our next announcement will be the release announcement for 1.10.0! This month, many bugfixes and maintenance changes have been made to get things ready, and we have finally finished the long-awaited overhaul of the input system.

New input framework

After a long time in development, work on the input-overhaul branch is complete, and the branch has been merged into master. This long-requested feature brings a unified API for handling game controllers and other input devices into the core library.

The new input framework unifies the various types of gaming input devices into a single abstraction. This means Panda3D now supports many different types of game controllers out of the box. It also marks an important milestone towards better support for consumer virtual reality headsets and for multi-touch devices such as mobile phones, both of which will be built on top of this abstraction layer.

In the new API, there are two ways to discover devices: one is to enumerate the list of input devices via base.devices, and the other is to listen for the connect-device and disconnect-device events, which are fired whenever a device is connected to or removed from the computer, respectively. Combining these methods allows you to dynamically switch the input source in your game depending on which device is active at any one time. When a device is found that the game can use, it can be hooked up to the data graph to make sure that it is polled every frame and that events are sent out for any button presses.
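As a rough illustration of combining the two discovery methods, here is a minimal sketch. The class name ActiveDeviceTracker and the "pad" prefix are made up for this example, and the calls it relies on (base.devices.getDevices(), base.attachInputDevice() and base.detachInputDevice()) reflect our reading of the 1.10 API, so double-check them against the sample programs while the manual pages are being written.

```python
class ActiveDeviceTracker:
    """Keeps exactly one input device hooked up to the data graph.

    `base` is expected to be a ShowBase instance. The class name and the
    "pad" event prefix are hypothetical, chosen only for this sketch.
    """

    def __init__(self, base):
        self.base = base
        self.active = None
        # Method two: get notified when devices come and go at runtime.
        base.accept("connect-device", self.on_connect)
        base.accept("disconnect-device", self.on_disconnect)
        # Method one: enumerate whatever is already plugged in at startup.
        for device in base.devices.getDevices():
            self.on_connect(device)

    def on_connect(self, device):
        # Only take over if no device is currently in use.
        if self.active is None:
            self.active = device
            # Attaching makes sure the device is polled every frame and
            # that button events are sent out, prefixed with "pad-".
            self.base.attachInputDevice(device, prefix="pad")

    def on_disconnect(self, device):
        if device != self.active:
            return
        self.base.detachInputDevice(device)
        self.active = None
        # Fall back to any other device that is still connected.
        for candidate in self.base.devices.getDevices():
            if candidate != device:
                self.on_connect(candidate)
                break
```

In a real game you would create the tracker once after constructing ShowBase, and you would probably filter the enumeration by device class (for example, gamepads only) rather than accepting the first device of any kind.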

This feature is already supported on all the major operating systems. On Windows, it leverages both XInput and the “raw input” API; on Linux, it uses the evdev kernel interface; and on macOS, it uses the core IOKit interfaces to implement support for a wide variety of input devices. The existing VRPN interfaces have been integrated with the new abstractions as well.

The manual pages describing the new API are still under construction, but we’ve added several sample programs to the repository that showcase how easy it is to interface with game devices. While the feature seems to be quite stable, we’d like to hear about your experience using it in your game projects, so let us know about any issues you run into or things we could improve. In particular, we’d like to know how well it works with your input devices, since we’ve only been able to test it with the devices we have access to.

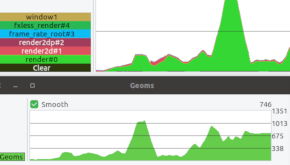

Profiling improvements

To help with debugging and profiling Panda applications, we’ve made a few changes to PStats to better categorize the different scene graphs that are being rendered. This will make it easier to see which parts of your scene are taking the most time to render. For people who use external tools like apitrace to debug aspects of the rendering process, we’ve also made things quite a bit easier by using the OpenGL debug group extensions to delineate the different parts that are being rendered.

GLSL improvements

A few new shader inputs have been added for GLSL: the p3d_Fog structure, which makes it easier to implement fog in your own shader, and the p3d_FragData fragment shader output, which makes it possible to write GLSL 1.30 shaders that make use of multiple render targets.
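To give an idea of how the two inputs could be used together, here is a small sketch of a GLSL 1.30 shader pair, kept in Python strings. The field names of the p3d_Fog structure (color, density, start, end, scale) and the explicit array size on p3d_FragData are assumptions on our part, as are the names vertex_color, eye_distance and make_fog_mrt_shader, so treat this as a starting point rather than a reference.

```python
# Vertex shader: passes the vertex color and the eye-space distance along.
FOG_MRT_VERTEX = """#version 130
uniform mat4 p3d_ModelViewProjectionMatrix;
uniform mat4 p3d_ModelViewMatrix;
in vec4 p3d_Vertex;
in vec4 p3d_Color;
out vec4 vertex_color;
out float eye_distance;
void main() {
    gl_Position = p3d_ModelViewProjectionMatrix * p3d_Vertex;
    vertex_color = p3d_Color;
    eye_distance = length((p3d_ModelViewMatrix * p3d_Vertex).xyz);
}
"""

# Fragment shader: writes a fogged color to the first render target and
# the unfogged color to the second one.
FOG_MRT_FRAGMENT = """#version 130
// Assumed layout of the fog structure; verify the field names.
uniform struct p3d_FogParameters {
    vec4 color;
    float density;
    float start;
    float end;
    float scale;
} p3d_Fog;
in vec4 vertex_color;
in float eye_distance;
out vec4 p3d_FragData[2];
void main() {
    // Linear fog, assuming scale holds 1.0 / (end - start).
    float visibility = clamp((p3d_Fog.end - eye_distance) * p3d_Fog.scale, 0.0, 1.0);
    vec3 fogged = mix(p3d_Fog.color.rgb, vertex_color.rgb, visibility);
    p3d_FragData[0] = vec4(fogged, vertex_color.a);
    p3d_FragData[1] = vertex_color;
}
"""


def make_fog_mrt_shader():
    """Compiles the pair above (hypothetical helper for this sketch)."""
    # Imported here so the strings can be inspected without Panda3D.
    from panda3d.core import Shader
    return Shader.make(Shader.SL_GLSL, FOG_MRT_VERTEX, FOG_MRT_FRAGMENT)
```

The second output only ends up somewhere useful if the buffer you render into actually has an extra color attachment. Setting that up is outside the scope of this sketch.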

It was already possible to use the GLSL preprocessor in Panda3D for #include statements and the like, and this ability has now been extended to procedurally generated shaders loaded via Shader.make. For example, this allows you to write various shader functions as part of a shader library, and then combine these different functions procedurally in code. We have great plans to bring the shader pipeline up to the next level by adding additional features in the next release.
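Here is a minimal sketch of what combining library functions procedurally might look like. The shaders/lib directory, the effect names and the helper functions are all hypothetical. Each library file is assumed to define one function of the form vec3 name(vec3), and we use the #pragma include spelling of the directive here.

```python
# Hypothetical location of the shader library; each file is assumed to
# define a single GLSL function named after the file: vec3 <name>(vec3).
LIBRARY_DIR = "shaders/lib"

VERTEX_SOURCE = """#version 130
uniform mat4 p3d_ModelViewProjectionMatrix;
in vec4 p3d_Vertex;
in vec4 p3d_Color;
out vec4 vertex_color;
void main() {
    gl_Position = p3d_ModelViewProjectionMatrix * p3d_Vertex;
    vertex_color = p3d_Color;
}
"""


def build_fragment_source(effects):
    """Generates a fragment shader that applies `effects` in order."""
    includes = [f'#pragma include "{LIBRARY_DIR}/{name}.glsl"' for name in effects]
    calls = [f"    color = {name}(color);" for name in effects]
    lines = (
        ["#version 130"]
        + includes
        + [
            "in vec4 vertex_color;",
            "out vec4 p3d_FragColor;",
            "void main() {",
            "    vec3 color = vertex_color.rgb;",
        ]
        + calls
        + ["    p3d_FragColor = vec4(color, vertex_color.a);", "}"]
    )
    return "\n".join(lines) + "\n"


def make_effect_shader(effects):
    """Compiles the generated source; the includes are resolved by Panda3D."""
    # Imported here so the generator can be used without Panda3D installed.
    from panda3d.core import Shader
    return Shader.make(Shader.SL_GLSL, VERTEX_SOURCE, build_fragment_source(effects))
```

Calling make_effect_shader(["grayscale", "invert"]) would then produce a shader that pulls in both library files and applies the two functions in that order. How the include paths are resolved for a shader that was not loaded from disk is something to verify in your own setup.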